
May 27, 2026

What happens when you build a design system with v0, Figma, and Windsurf, and let AI handle the speed while you keep the judgment.

12 min read

Just this month, I built a full design system in about 20 hours.

What used to take weeks, sometimes months, is now dramatically faster. So… what actually changed? And more importantly: what didn’t?

Design systems take time. On complex platforms, they can take hundreds of hours.

A while back, we were working with a large, complex product where inconsistencies had started to pile up. Different modules had evolved in isolation, teams were making independent decisions, and there were no shared guidelines. The answer was clear: we needed a design system.

AI tools were just starting to emerge back then. They were mostly useful for simple tasks, because they tended to hallucinate when things got complex. Developers had started using them earlier than designers, MCP didn't exist yet, and Figma plugins were the best automation we had.

But the context has changed. Fast.

The Manual Era

We did what most teams did. We stopped, and we built it. Manually.

Picture two designers, a mountain of inconsistencies, and no map. We had to cross-reference information manually: digging through the code, detecting what could be merged, and agreeing on naming conventions for every component. Hours and hours of discussion until we finally landed on a solution.

In the end, we got there. A cleaner system, faster workflows, and for the first time, both teams speaking the same visual language. Hard-won, but it worked.

But now a new AI model seems to be released every month. Design is finally catching up with what developers faced about two years ago. New tools have emerged, and with them, the scope of our work as designers has completely changed.

The Human Factor

For an internal project, I used our Kaizen site as a reference, combined with documentation from industry leaders as a guideline.

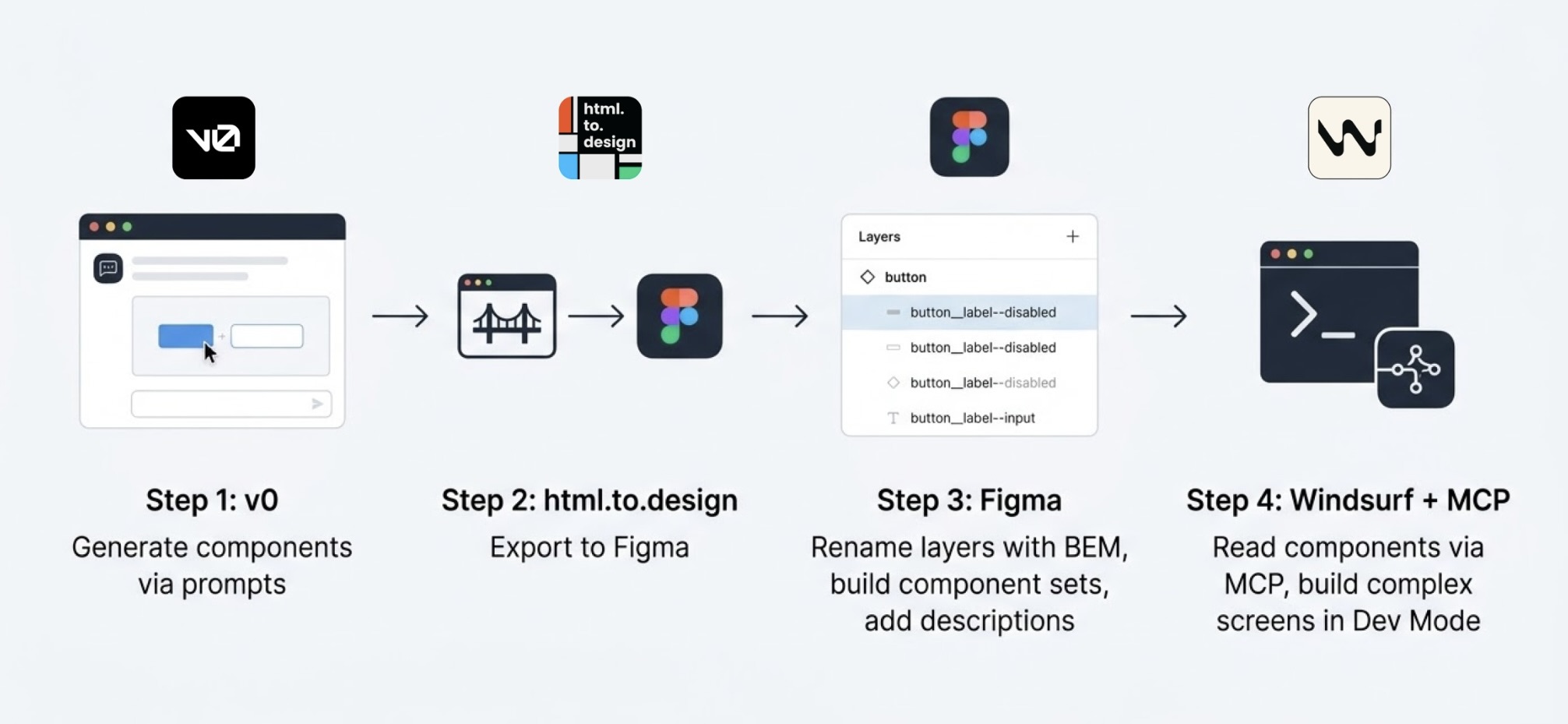

I started in v0, which is essentially a chat interface where you can generate UI components through prompts. I fed it the colors, typography, and a reference image, and from there it was a back-and-forth: the AI generated, I reacted, adjusted, and pushed until the output matched what I had in my head. And just like that, I started prompting my way through a design system.

Once a component was ready, I used the html.to.design plugin to bring it into Figma (yes, plugins are still alive!). Think of it as a bridge: the plugin exports designs directly from the browser into a Figma file.

Inside Figma, the intervention was more hands-on. First, I checked that everything was visually consistent with what was defined in v0: colors, typography, styles. Then I used Figma's built-in AI to rename all the component layers using the BEM convention (something that would have taken a significant amount of time to do manually).

BEM, which stands for Block Element Modifier, is a widely adopted naming convention in CSS. It structures layer names hierarchically and predictably, for example: button__label--disabled.

Using it keeps the code clean, readable, and consistent, especially when you're working alongside a developer who needs to understand what came out the other side.
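To make the pattern concrete, here is a minimal TypeScript sketch of how a BEM-style name is assembled and checked. The helper names (`bemName`, `isBemName`) and the exact validation rule (lowercase words separated by single hyphens) are assumptions made for illustration. They are not part of Figma, v0, or any tool mentioned here.

```typescript
// Hypothetical helper: assembles a BEM-style name from its parts.
// block            -> "button"
// block + element  -> "button__label"
// + modifier       -> "button__label--disabled"
function bemName(block: string, element?: string, modifier?: string): string {
  let name = block;
  if (element) name += `__${element}`;
  if (modifier) name += `--${modifier}`;
  return name;
}

// Assumed rule for this sketch: each part is lowercase letters or digits,
// with single hyphens allowed between words (e.g. "stats-card").
const PART = "[a-z0-9]+(?:-[a-z0-9]+)*";
const BEM_PATTERN = new RegExp(`^${PART}(?:__${PART})?(?:--${PART})?$`);

// Hypothetical helper: checks whether a layer or class name follows the pattern.
function isBemName(name: string): boolean {
  return BEM_PATTERN.test(name);
}

console.log(bemName("button", "label", "disabled")); // button__label--disabled
console.log(isBemName("button__label--disabled"));   // true
console.log(isBemName("Button Label"));              // false
```

A check like this could run over exported layer names to flag the ones that drifted from the convention, though the renaming itself was done with Figma's built-in AI in this project.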

Beyond naming, I also made sure the layer structure would generate the right properties when building component sets in Figma, so that all the variants would be correctly exposed and usable. My team also pointed out that adding descriptions to components and variants was key as context for any agent using them through an MCP.

The last step was connecting everything to Windsurf via MCP. With a frame selected in Dev Mode, Windsurf could read the Figma file and use the components to build more complex screens.

We worked closely with a developer throughout this phase. Not just for the technical knowledge, but because having someone who reads code fluently meant catching things we wouldn't have spotted otherwise. The design role here was direction and supervision: making sure the AI used the components correctly and didn't invent solutions where context was missing.

Every step of the process had a human decision behind it.

An Unexpected Discovery

At one point, before we had any of the naming conventions figured out, I selected a frame and asked Windsurf to build a form using the components inside it, styled to match a specific card. The developer next to me was skeptical until he saw the result, and then he was just as surprised as I was.

What we realized is that the MCP wasn't reading layer names to understand context. It was reading everything inside the frame, even the loose text sitting alongside the components. Good naming is still worth doing. But the MCP doesn't need it to understand what it's looking at.

Learning to Talk to an AI

The more specific and contained your prompt, the better the outcome. We started with the most atomic component, the button, and worked outward from there. Each approved component became context for the next one, so the system gradually picked up the visual language we were building.

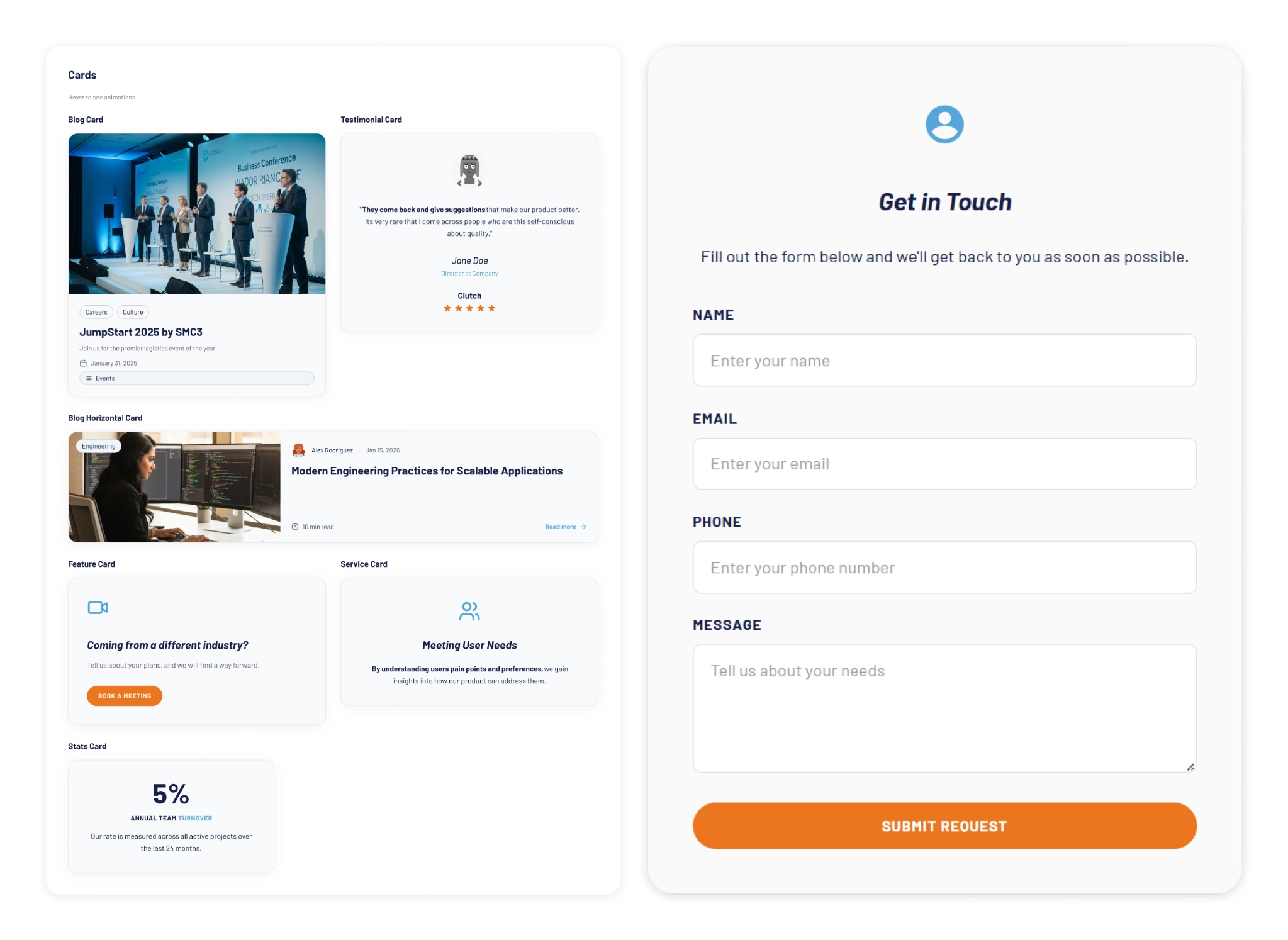

At some point I got ambitious and asked for five cards in a single prompt: blog card, service card, testimonial card, stats card, feature card… structures, states and all. The AI delivered.

Visually, everything looked fine. Then the developer looked at the code and pointed out that all five cards were independent components instead of variants of one. For a design system, that breaks everything.

One correction prompt fixed it. But it was a good reminder: the AI does exactly what you ask, not what you mean. And fixing it after the fact can cost more than getting it right from the start.
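The difference the developer spotted can be sketched in TypeScript. This is an illustration under assumptions, not the code v0 or Windsurf produced: the `CardProps` shape, the state names, and the `cardClassName` helper are all hypothetical. The point is that one component takes a `variant` value, instead of five separate components that share nothing.

```typescript
// The five card types from the prompt, modeled as variants of ONE component.
type CardVariant = "blog" | "service" | "testimonial" | "stats" | "feature";

// Hypothetical states; the actual states in the project are not listed here.
type CardState = "default" | "hover" | "disabled";

interface CardProps {
  variant: CardVariant;
  state?: CardState;
  title: string;
}

// Hypothetical helper: one function resolves the styling for every variant,
// so a change to the base "card" class reaches all five at once.
function cardClassName({ variant, state = "default" }: CardProps): string {
  const classes = ["card", `card--${variant}`];
  if (state !== "default") classes.push(`card--${state}`);
  return classes.join(" ");
}

console.log(cardClassName({ variant: "blog", title: "Hello" }));
// card card--blog
console.log(cardClassName({ variant: "stats", state: "hover", title: "Usage" }));
// card card--stats card--hover
```

With five independent components, the shared base would have to be repeated, and kept in sync, five times.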

Some Things Learned Along the Way

- Precision is key. Natural language is fine when you're asking for a cooking recipe, but when you're referring to a component, wording matters: say "create" instead of "add" and you'll probably end up with a whole new set of components instead of additional variants of an existing one.

- The "Frame" is the context: MCPs can read everything inside the frame you select. This is a game-changer. It means the "naming conventions" debate might be shifting. If the AI understands the context visually and structurally, will we still spend hours discussing nomenclature in 2027?

- No matter what happens, you can always roll back in less than 5 minutes and start over.

- Work closely with a developer: they can help you understand MCPs and clear up any code-related doubts. Once you start to grasp their logic, you'll learn very quickly how to prompt in ways that AI actually understands.

- There's nothing to lose by asking the AI to follow a specific naming convention for the code. It keeps everything clean and readable, and it takes no extra effort.

- The AI covers roughly 80% of the work (generation, variations, exploration...), but the remaining 20% is where quality lives, and that part is not delegable. The AI executes. The judgment is still yours. And if you skip the review, you're not saving time: you'll spend it later.

- Context matters more than tooling. What you don't define, the AI will invent. Small components may be resolved well, but large interfaces require more definition from the start. A well-defined system scales. An undefined one generates inconsistencies faster than you can fix them.

- Figma is no longer the mandatory starting point. It's useful as a visual reference, a QA space, or a consolidation layer. But the AI doesn't need it. We still do.

- There's no single right workflow yet. What you do depends on the project. We're in a transition moment where the tools change faster than the standards. The best thing you can do right now is experiment.

What AI Still Can’t Replace

Through all of this, a few things became very clear. These are the parts that didn’t change:

- Knowing when something looks off. The AI generates, but it doesn't notice when the result doesn't feel right. That eye is yours.

- Direction and supervision. The AI uses the components you give it, but without someone supervising, it invents solutions wherever context is missing.

- The definition of done is still a human call, whether it's a conversation with a PO, a stakeholder, or just the designer's criteria. There's no prompt for that.

- The context: knowing why certain decisions matter, what a component should communicate, what the user will actually feel. Business knowledge, stakeholder dynamics, unwritten rules, empathy for the end user. These take years to build and live in the people doing the work, not in the tools they use.

My Two Cents

The tools changed, and that gave me the chills, but throughout this experience I found that the designer's role is more alive than ever.

What once took a team weeks can now be prototyped in hours. That’s not a threat; it’s an invitation to get curious.

I'm still figuring a lot of this out, and I suspect most of us are. There's no right workflow yet, and honestly, that's fine. We are in a transition where tools change faster than standards. The best thing you can do is experiment. Don't wait for a "definitive" workflow: it might be obsolete by next month.

Go ahead, try prompting your way through a component. You might be surprised how fast the system starts to take shape.
